Smart Grid and Microgrid

From NUEESS


Description

Background

Sustainability has become an imperative requirement for many of our society's infrastructures and systems in the face of the impending energy crisis and environmental deterioration. Power grids are being transformed into smart grids equipped with advanced sensors and information and communication technologies. In a smart grid, energy flows in both directions between the grid and consumers who operate renewable generation, and these flows are monitored and controlled by sensors, smart meters, digital controls and analytical tools. Smart grids usually introduce Demand Response (DR) and Distributed Generation (DG) to improve the efficiency of energy consumption and generation.

Purpose

- A community-level, microgrid-based system scheme is designed to make sustainable energy usage more affordable for ordinary residents.

- Dynamic residential DR with enhanced user-agent interaction is designed to adapt to users' preferences.

- A shared cost-led Micro Combined Heat and Power (CHP) management strategy is designed to increase DG utilization and reduce energy consumption cost.

- Vanadium Redox Battery (VRB) management is designed for environment-adaptive discharging.

System Scheme

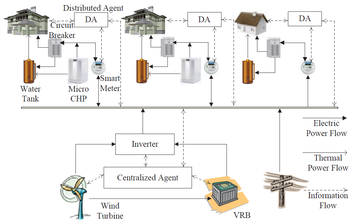

The microgrid is funded and shared by a cluster of residents. In proportion to their investment shares, residents subscribe to a certain amount of power from the microgrid at a low price that covers the unpaid investment cost, fuel cost and management cost. There are three types of flow in the microgrid: electric power flow, thermal power flow and information flow. Electric power is supplied both by the utility grid and by internal hybrid generation from the wind turbine, the micro CHP systems and battery discharging. Micro CHP is a small-scale CHP system for residential generation. To keep the model general, some houses in the community are equipped with micro CHP systems while others are not; the latter can generate thermal energy only with electric heat pumps. Thermal energy is stored as hot water in a tank for later consumption. A VRB is chosen to store surplus wind energy and to discharge it when wind generation cannot meet demand, which reduces the energy consumption cost.

The management of the microgrid is based on hierarchical optimization and bill balance. The hierarchical optimization uses both centralized and distributed agents and consists of three steps:

- Dynamic residential DR to reduce energy consumption cost, maintain a high user convenience rate and adapt to user preferences.

- Shared cost-led micro CHP generation management to reduce cost.

- Environment-adaptive VRB discharging management based on Reinforcement Learning (RL) to reduce cost in the presence of stochastic elements.
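To make the hand-off between the three steps concrete, here is a minimal sketch of the energy balance for one decision period. It assumes all quantities are energies in kWh per period, that the utility grid covers whatever the internal supply and the VRB leave unfed, and that the step-3 action is the fraction of the unfed load the VRB discharges. The function `utility_purchase` and its parameter names are hypothetical, not taken from the source.

```python
from typing import Tuple

def utility_purchase(scheduled_load: float, wind: float, chp_output: float,
                     vrb_available: float, discharge_fraction: float) -> Tuple[float, float, float]:
    """Hypothetical energy balance for one decision period (all values in kWh).

    scheduled_load: community electric load after step 1 (DR scheduling)
    wind: wind generation in the period
    chp_output: micro CHP electric output decided in step 2
    vrb_available: energy the VRB can currently deliver
    discharge_fraction: step-3 action, share of the unfed load the VRB covers
    Returns (vrb_discharge, utility_energy, surplus_internal).
    """
    if not 0.0 <= discharge_fraction <= 1.0:
        raise ValueError("discharge_fraction must be in [0, 1]")
    internal = wind + chp_output
    unfed = max(scheduled_load - internal, 0.0)     # load left unfed after step 2
    surplus = max(internal - scheduled_load, 0.0)   # internal surplus, e.g. for VRB charging
    vrb_discharge = min(discharge_fraction * unfed, vrb_available)  # step 3
    utility = unfed - vrb_discharge                 # remainder bought from the utility grid
    return vrb_discharge, utility, surplus
```

For example, `utility_purchase(10, 4, 3, 5, 0.5)` leaves 3 kWh unfed after wind and CHP, discharges 1.5 kWh from the VRB and buys the remaining 1.5 kWh from the utility, returning `(1.5, 1.5, 0.0)`.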

System Models

Dynamic Residential DR

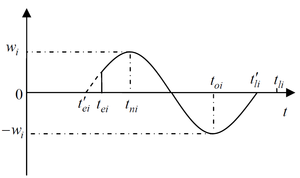

Electricity demand is represented as schedulable and fixed energy consumption tasks, and dynamic DR focuses on the schedulable load. Each schedulable task has its own convenience rate distribution, which reflects the satisfaction a user gets when the task is executed at different times.
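A task with a per-slot convenience rate distribution can be captured by a small record. This is a sketch under stated assumptions: time is divided into discrete slots, convenience rates lie in [0, 1], and the names `Task`, `schedulable_tasks` and `mean_convenience` are hypothetical.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Task:
    """Hypothetical record for one energy consumption task."""
    name: str
    energy_kwh: float          # energy drawn when the task runs
    schedulable: bool          # False for fixed load that DR does not shift
    convenience: List[float]   # convenience rate in [0, 1] for each time slot

    def most_convenient_slot(self) -> int:
        """Slot in which the user would be most satisfied."""
        return max(range(len(self.convenience)), key=self.convenience.__getitem__)

def schedulable_tasks(tasks: List[Task]) -> List[Task]:
    """Dynamic DR only reschedules the schedulable part of the load."""
    return [t for t in tasks if t.schedulable]

def mean_convenience(tasks: List[Task], slots: List[int]) -> float:
    """Average convenience rate achieved when tasks[i] runs in slots[i]."""
    return sum(t.convenience[s] for t, s in zip(tasks, slots)) / len(tasks)
```

For instance, a washer with rates `[0.2, 0.9, 0.6]` is most convenient in slot 1, while a fixed load such as a fridge is filtered out by `schedulable_tasks`.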

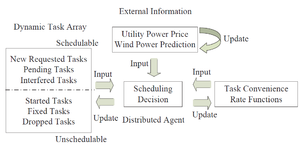

The dynamic DR model takes the dynamic task array, external information and the task convenience rate functions as inputs and generates a task schedule as output. After scheduling, only the tasks scheduled in the current decision period are executed; the others are rescheduled in the next decision period with updated external information.
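The execute-current, reschedule-the-rest loop is a rolling-horizon scheme. A minimal sketch, assuming the optimizer is abstracted as a callback `schedule(pending, now)` (hypothetical) that returns a planned period no earlier than `now` for every pending task:

```python
from typing import Callable, Dict, List

Scheduler = Callable[[List[str], int], Dict[str, int]]

def rolling_horizon(tasks: List[str], periods: int, schedule: Scheduler) -> Dict[int, List[str]]:
    """Run dynamic DR period by period.

    `schedule(pending, now)` stands in for the optimizer: it must return a
    planned period >= now for every pending task, using whatever external
    information is available at `now`.
    """
    pending = list(tasks)
    executed: Dict[int, List[str]] = {}
    for now in range(periods):
        plan = schedule(pending, now)                           # re-plan with updated information
        executed[now] = [t for t in pending if plan[t] == now]  # execute current-period tasks only
        pending = [t for t in pending if plan[t] != now]        # reschedule the rest next period
    return executed
```

With a toy scheduler that runs each task at its preferred period (or now, if that period has passed), e.g. preferences `{"washer": 2, "dryer": 3, "dishwasher": 0}` over 4 periods, the result is `{0: ['dishwasher'], 1: [], 2: ['washer'], 3: ['dryer']}`. Because the plan is recomputed every period, a scheduler that reads updated prices or forecasts can move tasks that have not yet run.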

The convenience rate function comprises a basic function and a reward/penalty function. The basic function is used for initialization. The reward/penalty function represents the agent's reaction to user interference and updates the convenience rate function dynamically. When the user changes the execution time of a task scheduled by the agent, the new execution time is regarded as the user's new comfort time and receives the maximum convenience rate increase, while the previously scheduled time is penalized by decreasing its convenience rate by the same value.
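The reward/penalty rule can be sketched as an update on a per-slot convenience list. This reads "maximum convenience rate increase" as a fixed step `delta` (a hypothetical parameter) applied to the user's chosen slot and subtracted from the agent's old slot, with rates kept within [0, `max_rate`]:

```python
from typing import List

def apply_user_override(convenience: List[float], scheduled: int, chosen: int,
                        delta: float = 0.3, max_rate: float = 1.0) -> List[float]:
    """Reward/penalty update after the user moves a task from `scheduled` to `chosen`.

    `delta` is a hypothetical maximum convenience-rate increase: the chosen slot
    gains `delta` (capped at `max_rate`) and the agent's old slot loses the
    same `delta` (floored at 0). Returns a new list; the input is not modified.
    """
    updated = list(convenience)
    if scheduled == chosen:
        return updated                    # no interference, nothing to learn
    updated[chosen] = min(updated[chosen] + delta, max_rate)     # reward the new comfort time
    updated[scheduled] = max(updated[scheduled] - delta, 0.0)    # penalize the old time
    return updated
```

Repeated overrides thus shift the distribution toward the times the user actually prefers, which is how the agent adapts without an explicit preference model.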

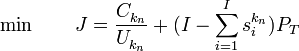

The DR agent optimizes a cost function that considers both energy consumption cost and user convenience.

The problem is solved using the Particle Swarm Optimization (PSO) algorithm.
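The exact cost function is not reproduced on this page, so the sketch below pairs a generic global-best PSO with a hypothetical stand-in, `dr_cost`: for each task, slot price times task energy plus a weighted convenience loss (1 minus the convenience rate). Each particle coordinate is a continuous position that is floored to a slot index. The inertia and acceleration coefficients are common textbook values, not taken from the source.

```python
import random
from typing import Callable, List, Sequence, Tuple

def pso(cost: Callable[[List[float]], float], dim: int, lower: float, upper: float,
        n_particles: int = 30, iters: int = 100, w: float = 0.729,
        c1: float = 1.494, c2: float = 1.494, seed: int = 0) -> Tuple[List[float], float]:
    """Minimal global-best PSO that minimizes `cost` over the box [lower, upper]^dim."""
    rng = random.Random(seed)
    pos = [[rng.uniform(lower, upper) for _ in range(dim)] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_cost = [cost(p) for p in pos]
    g = min(range(n_particles), key=pbest_cost.__getitem__)
    gbest, gbest_cost = pbest[g][:], pbest_cost[g]
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])    # pull toward own best
                             + c2 * r2 * (gbest[d] - pos[i][d]))      # pull toward swarm best
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lower), upper)  # stay in the box
            c = cost(pos[i])
            if c < pbest_cost[i]:
                pbest[i], pbest_cost[i] = pos[i][:], c
                if c < gbest_cost:
                    gbest, gbest_cost = pos[i][:], c
    return gbest, gbest_cost

def dr_cost(x: Sequence[float], energy: Sequence[float], price: Sequence[float],
            convenience: Sequence[Sequence[float]], weight: float = 1.0) -> float:
    """Hypothetical DR cost: energy cost plus weighted convenience loss per task."""
    n_slots = len(price)
    total = 0.0
    for i, xi in enumerate(x):
        slot = min(int(xi), n_slots - 1)    # floor continuous position to a slot index
        total += price[slot] * energy[i] + weight * (1.0 - convenience[i][slot])
    return total
```

For a household one would call `pso(lambda x: dr_cost(x, energy, price, convenience), dim=len(energy), lower=0.0, upper=float(len(price)))` and floor each coordinate of the returned position to obtain slot indices. The `weight` parameter trades energy cost against user convenience.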

Shared Cost-led CHP Management

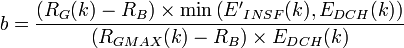

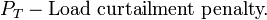

In this section, the generation of the micro CHP systems and the power consumption of the electric heat pumps are optimized to minimize the energy consumption cost of the whole community. The constraints include the maximum electric power of each house, thermal power balance, the micro CHP fuel consumption range and the heaters' power range. The optimization problem is formulated for each (n-th) decision period.

This optimization problem is also solved using the PSO algorithm.
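Since the formulation is not reproduced here, the following is a hypothetical penalty-based objective that encodes the four constraints named above. A population-based optimizer such as PSO can minimize it directly, because infeasible points are simply penalized rather than rejected. The linear CHP and heat-pump models, the efficiencies, the limits and the penalty weight are all assumptions, and the thermal balance ignores hot-water tank storage for brevity.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class House:
    load_kw: float        # electric load after DR scheduling
    heat_kw: float        # thermal demand in this period
    max_import_kw: float  # maximum electric power of the house
    has_chp: bool

# Hypothetical parameters, not taken from the source.
ETA_E, ETA_T, COP = 0.25, 0.65, 3.0   # CHP electric/thermal efficiency, heat-pump COP
FUEL_MAX, HP_MAX = 8.0, 3.0           # fuel consumption and heater power limits
PENALTY = 1e3                         # weight on constraint violations

def community_cost(fuel: List[float], hp: List[float], houses: List[House],
                   gas_price: float, elec_price: float) -> float:
    """Penalized energy cost of the whole community for one decision period."""
    cost = violation = net_total = 0.0
    for f, h, house in zip(fuel, hp, houses):
        if not house.has_chp:
            f = 0.0                                    # no micro CHP: heat pump only
        violation += max(-f, f - FUEL_MAX, 0.0)        # fuel consumption range
        violation += max(-h, h - HP_MAX, 0.0)          # heat-pump power range
        violation += max(house.heat_kw - (ETA_T * f + COP * h), 0.0)  # thermal balance
        net = house.load_kw + h - ETA_E * f            # grid import; negative means export
        violation += max(net - house.max_import_kw, 0.0)  # maximum electric power
        cost += gas_price * f
        net_total += net
    # Shared cost: CHP surplus in one house offsets demand in the others.
    cost += elec_price * max(net_total, 0.0)
    return cost + PENALTY * violation
```

Summing net imports across houses before pricing is what makes the cost "shared": electricity a CHP house exports reduces what the community buys from the utility.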

Environment Adaptive VRB Management

In this step, centralized VRB management is introduced to store surplus wind energy and to discharge it when demand in the community is high. The VRB has three states: charging, discharging and idle. Charging management is simple: when there is surplus wind power, the VRB is charged if it is not full. The main problem of VRB management is therefore to find the optimal discharging policy that compensates the load left unfed after the second step and reduces cost. The management also accounts for stochastic elements, including stochastic load and wind power supply.
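The charging rule is simple enough to state directly. A sketch, assuming the VRB state of charge is tracked in kWh and that charging is limited per period and subject to an efficiency loss; both `max_charge_kwh` and `efficiency` are hypothetical parameters that the page does not specify.

```python
from typing import Tuple

def vrb_charge(soc_kwh: float, capacity_kwh: float, surplus_wind_kwh: float,
               max_charge_kwh: float, efficiency: float = 0.8) -> Tuple[float, float]:
    """Charge the VRB from surplus wind if it is not full.

    Returns (new_soc_kwh, wind_used_kwh). `max_charge_kwh` (per-period charging
    limit) and `efficiency` (charging efficiency) are hypothetical parameters.
    """
    room = capacity_kwh - soc_kwh
    if surplus_wind_kwh <= 0.0 or room <= 0.0:
        return soc_kwh, 0.0                   # no surplus or already full: do not charge
    # Use no more wind than is available, than the charge limit allows,
    # or than is needed to fill the remaining capacity after losses.
    wind_used = min(surplus_wind_kwh, max_charge_kwh, room / efficiency)
    return soc_kwh + wind_used * efficiency, wind_used
```

Because charging follows this fixed rule, only the discharging decision needs to be learned.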

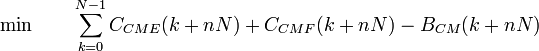

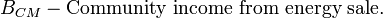

The decision-making process of VRB management can be modeled as a stochastic Markov Decision Process (MDP). The state space includes the utility electricity price, the forecasted wind power, the load unfed by internal supply, and the depth of discharge (DOD) of the VRB:

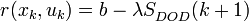
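Tabular Q-learning needs a finite state set. One plausible way to obtain it, assuming the four components of the state x(k) are binned with hand-picked thresholds (the bin edges and the name `discretize` are hypothetical, not from the source), is:

```python
from bisect import bisect_right
from typing import NamedTuple, Sequence, Tuple

class VRBState(NamedTuple):
    price: float      # R_G(k): utility electricity price
    wind_kw: float    # P_WIND(k): forecasted wind power
    unfed_kwh: float  # E_INSF(k): load unfed by internal supply
    dod: float        # S_DOD(k): depth of discharge of the VRB, 0..1

# Hypothetical bin edges, one tuple per state component.
EDGES: Tuple[Tuple[float, ...], ...] = (
    (0.10, 0.20),        # price: low / mid / high
    (2.0, 6.0),          # wind power
    (1.0, 5.0, 10.0),    # unfed load
    (0.25, 0.5, 0.75),   # depth of discharge
)

def discretize(s: VRBState, edges: Sequence[Sequence[float]] = EDGES) -> Tuple[int, ...]:
    """Map each continuous component to the index of its bin."""
    return tuple(bisect_right(e, v) for e, v in zip(edges, s))
```

Finer bins describe the state more precisely but enlarge the Q-table and slow down learning.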

The action of the centralized agent is to decide what percentage of the load unfed by internal supply is compensated by VRB discharging. The reward function used to evaluate actions combines the normalized cost reduction and the reliability level (energy backup).

In this step, online reinforcement learning is implemented with the Q-learning algorithm.
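A minimal tabular Q-learning sketch for this step follows. The action set (discharge fractions of the unfed load), the reward weights, and taking reliability as the remaining energy backup 1 - DOD are assumptions made for illustration; the update itself is the standard one-step Q-learning rule with epsilon-greedy exploration.

```python
import random
from collections import defaultdict
from typing import Hashable

# Hypothetical discrete action set: fraction of the unfed load E_INSF(k)
# that the VRB discharges to cover in this period.
ACTIONS = (0.0, 0.25, 0.5, 0.75, 1.0)

def reward(cost_reduction: float, max_cost_reduction: float, dod: float,
           w_cost: float = 0.7, w_rel: float = 0.3) -> float:
    """Hypothetical reward: normalized cost reduction plus reliability.

    Reliability is taken as the remaining energy backup, 1 - DOD.
    The weights w_cost and w_rel are assumptions.
    """
    normalized = cost_reduction / max_cost_reduction if max_cost_reduction > 0 else 0.0
    return w_cost * normalized + w_rel * (1.0 - dod)

class QAgent:
    """Tabular Q-learning with epsilon-greedy exploration."""

    def __init__(self, alpha: float = 0.1, gamma: float = 0.9,
                 epsilon: float = 0.1, seed: int = 0):
        self.q = defaultdict(float)       # (state, action index) -> estimated value
        self.alpha, self.gamma, self.epsilon = alpha, gamma, epsilon
        self.rng = random.Random(seed)

    def act(self, state: Hashable) -> int:
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(len(ACTIONS))                        # explore
        return max(range(len(ACTIONS)), key=lambda a: self.q[(state, a)])  # exploit

    def update(self, state: Hashable, action: int, r: float, next_state: Hashable) -> None:
        """One-step Q-learning: Q(s,a) += alpha * (r + gamma * max_a' Q(s',a') - Q(s,a))."""
        best_next = max(self.q[(next_state, a)] for a in range(len(ACTIONS)))
        self.q[(state, action)] += self.alpha * (r + self.gamma * best_next - self.q[(state, action)])
```

In each period the agent observes the (discretized) state, picks an action index `a`, discharges `ACTIONS[a]` times the unfed load (limited by the energy the VRB can deliver), observes the resulting cost reduction and DOD, and calls `update`. Learning online this way lets the policy adapt to the stochastic load and wind supply without an explicit model of either.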

People

Publications

- B. Jiang and Y. Fei, “Smart home in smart microgrid: A cost-effective energy ecosystem with intelligent hierarchical agents,” IEEE Trans. on Smart Grid, vol. 6, no. 1, pp. 3-13, Jan. 2015.

- B. Jiang and Y. Fei, “Dynamic residential demand response and distributed generation management in smart microgrid with hierarchical agents,” in Proc. IEEE Int. Conf. on Smart Grid and Clean Energy Technologies, Sep. 2011.


State vector of the VRB management MDP:

$$x(k) = \left[ \begin{array}{cccc} R_G(k) & P_{WIND}(k) & E_{INSF}(k) & S_{DOD}(k) \end{array} \right]^T$$